Episode Transcript

[00:00:00] Speaker A: Foreign.

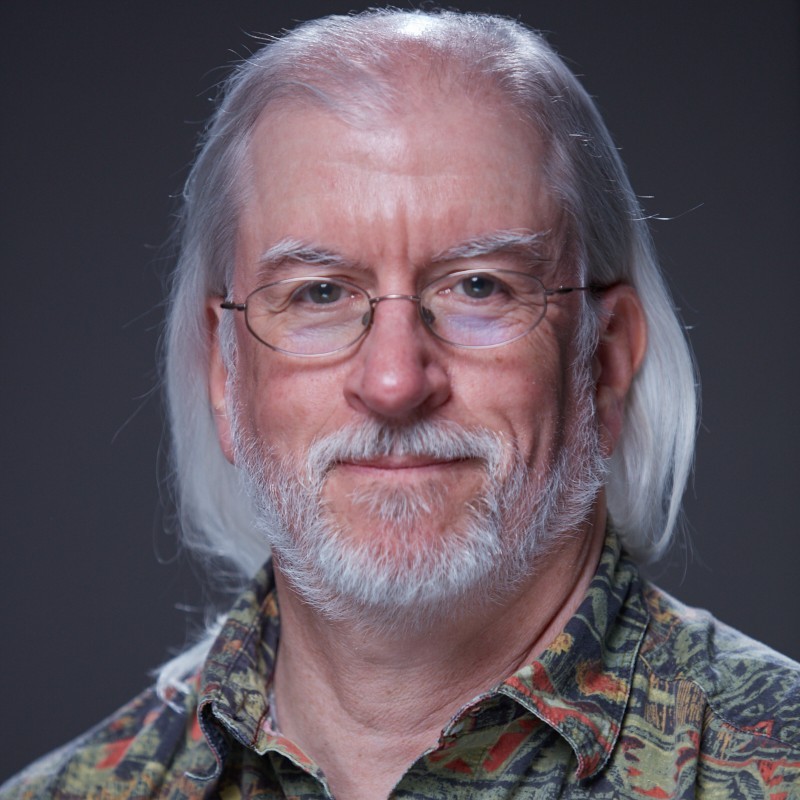

Hey everybody. Welcome to another episode of True DataOps. I'm your host, Keith Belanger, field CTO here at Data Ops Live, and Snowflake, Data Superhero. Each episode in this season we are really exploring the topic of AI and AI ready data. If you've missed any of our previous episodes, you can Visit us at DataOps Live YouTube channel. Subscribe you'll get notified for any upcoming episodes and when new ones are published. Subscribe I'm really excited today. My guest today is Mark Barlow. He's a colleague and principal sales engineer here at ddops Live. Welcome, Mark, to the show.

[00:00:38] Speaker B: Yeah, thanks for having me. Thanks for taking time out of your day to let me talk.

[00:00:45] Speaker A: Yeah, you and I have done a lot of talking off the air, so it's exciting to have this conversation here live with everybody else. I know you well, but many people don't. So Mark, if you could take a moment to give everybody a little bit of background about yourself and tell us who you are.

[00:01:00] Speaker B: Yeah. Mark Bartlow. I've worked in the IT, sales, software, and systems industry for many years now. I started at Unisys as a systems analyst, where I worked on internal manufacturing and distribution systems, and then progressed into advertising. I was a VP of co-op services and future technology in advertising.

I did a little bit of ERP implementation at the turn of the century to solve Y2K problems, and then various jobs: product manager, technical sales. I was at a very large company for 11 years doing North American technical sales management.

Then I went into the Hadoop world, data replication, as a senior director there, and then the last three and a half years at DataOps.live doing sales engineering and a whole bunch of other tasks.

[00:01:55] Speaker A: I always find it interesting hearing everybody's data journey over the years, and a lot of the overlap and differences that people bring. But today we are going to talk about skills and the value they bring to the world of AI-generated code. Now, you and I have had lots of discussions about skills, participating at some of the Data for Breakfast events and just in general.

But Mark, before we get into skills, there's going to be a reason why we have skills. What is actually broken, and maybe that's a strong word, today with AI-assisted data engineering?

[00:02:35] Speaker B: I wouldn't say it's broken, but there are definitely issues that need to be resolved, and resolved fairly quickly. With the onset of AI agents and, for lack of a better term, vibe coding, because there are a lot of elements of that in the agentic AI code generation world, you've got a little bit of chaos. Now, for certain projects, like developing your own system, you hear of all the great feats of vibe coding, and that's great. But for businesses, where value creation for the end customer, the consumer of your products, is very important, it's not as great. You have no governance, no standards, no domain expertise, or not as much as you need, and you get that vibe coding chaos. So what skills are going to do is address that.

[00:03:27] Speaker A: Yeah. And you know what's interesting is we could literally take the exact same prompt, go into an AI code generator, and come up with two completely different pieces of code, right? Both might be completely right. But to your point, from a governance, repeatability, and standards perspective, neither may even pass that check for your organization.

[00:03:54] Speaker B: Absolutely, absolutely. I mean, this is how I got into using skills, because I was doing the vibe coding thing with the DataOps.live platform in our development environment for the last, let's say, six months to a year. Then it got to the point where I really wanted to condense what I was showing on occasion. And I said, boy, I just looked inside this Claude skills thing, and it was only about three, four, five months ago that this came out, and I said, if I could use skills, then I could get that repeatable process and domain expertise I need in my session. So, done, I won't have to worry about it, and I can get to what I really want to do or show, or condense that down. And that's what skills have helped me do with the AI code and the AI agents.

[00:04:49] Speaker A: You know, as somebody who has many years as the lead architect for many Snowflake implementations, one of the key stress points for me was always: how do you make sure that many data engineers, and all those participating in the development of your ecosystem, have that level of consistency, and the repeatability that goes along with it? So skills really bring that ability for somebody like myself, as an architect, to sit here and say, this is how I want it done; right, wrong, or indifferent, this is the consistency that we need. And you can put those into the skills. But we're talking about skills, so let's take a step back. Mark, what is a skill? Let's really break it down so people can understand. What really is it?

[00:05:39] Speaker B: The best way to do that is to actually show it. So I would say we just go in, and I do one of the problems that I demo to prospects and customers, and I just run through it.

[00:05:51] Speaker A: Yeah.

[00:05:51] Speaker B: For the rest of this session, I'll show you what a skill is. I created a couple of skills that I use, and we have a whole bunch of other ones that come with what we call Metis, our development environment. But showing it is probably better than describing what a skill is.

[00:06:11] Speaker A: Perfect. Yeah. I can see your Snowflake screen, so take it away.

[00:06:15] Speaker B: So here's a production database, AAA data app, cp, the icd. You can see I have a service schema and a customer table. And a somewhat simple problem: I want to add a C_STATUS column to this table. Of course, if you follow the True DataOps principles, you won't go and do this right in production. You're going to do this in a sandbox. So I created this zero-copy clone using DataOps.live.

And this is where I'm going to add that C status column. But without the data for the C status, it doesn't mean anything. What that entails is loading the data with a stage. So you have to create a stage, create a job to do the load, create an orchestrator configuration on the table to say, get the data from that stage into this column, and then update the CI/CD pipeline to do all that. Those four or five steps were something that I used to kind of vibe code, and I would get it right most of the time. I like to say I would cajole the right answer out of the AI agent, and I never knew what I was getting. Now, did that make it a little bit exciting and fun? Yes. But to your point, for a business, it's not really the best outcome. You want that value creation to be more predictable. So let's go in and do that. What I did is, in DataOps.live, I ran this pipeline that created my zero-copy clone, my feature branch environment, my sandbox, if you will. It's just called add column. If I want to observe what that job did, the Snowflake setup job, that's just taking the infrastructure-as-code configuration and applying it to the sandbox. And you can see the first thing it did is create that sandbox environment. Now, if something goes wrong and I don't get the result I want, I can easily tear it down and start over, just like a container would be used in DevOps in software. And now you simply go into the project, into the branch, and open this in Develop. That's what I did here. And, prepping for this, I asked the question: what is a skill?

And here's what Metis said. In the context of Metis, the DataOps.live assistant, a skill is a specialized capability module that helps Metis, the AI assistant, provide targeted expertise for specific DataOps.live tasks and workflows. Great. What that means in a nutshell is it provides domain expertise and very concentrated context for what you're going to create. And you can see there: specialized domain, structured format. And look at this. Skills are organized with a consistent format: a name and a description that defines when the skill should be used. It's in English. I call it programming English. Task-oriented: skills are designed to help with specific tasks like, oh, surprise, surprise, creating stage configurations for data ingestion. That's exactly what we're going to do here. That's exactly the problem I solved.

[00:09:40] Speaker A: And what's really key there, you know, Mark, is that every organization might have a different way they want to do those particular things that you're highlighting. You know, there's a million ways to do the same thing. You just want to make sure it's being done the same within your organization.

[00:09:58] Speaker B: Absolutely. And it is very much results-oriented. The example I use, getting really into the weeds for code, is that you don't care if it uses a for loop, a while loop, or a repeat loop if it always gives you the right answer. But it's good if it can be more deterministic. And you can see here the skills that are available in DataOps.live. All of these literally help you build what the seven principles of DataOps are into your project. And I created these two: add col stage, for ingesting data from S3 buckets into new columns, and get column meaning, for determining the business meaning of table columns. I added those two because I needed to. It was a necessity. So let's get right into it. This first one will just use the generic skills or context that DataOps.live provides out of the box. The first thing I need to do is add the column, and notice I'm not going into the YAML to do it anymore. I mean, this is the way the world is going. I don't have to say VARCHAR(1), because it will ask me, but as I use it, I know what to ask for. And then "add column to", and now this is very interesting: I can literally pick the configuration file I want right in there, and off we go. And now we're getting into what's similar to a vibe coding world. I call it more agentic AI development.

And now it's going off and it's saying, hey look, I want to edit this file, I'll have to add this column, and boom, it's done. Now, something as simple as that, you'd be surprised how long it can take with these types of configurations. This whole process I'm going through right now, I hadn't done it for a while, and about four, five, six months ago, getting it right took me an hour and 20 minutes.

[00:12:06] Speaker A: Right.

The old fashioned way. Or should I say how you did it last year?

[00:12:11] Speaker B: How we did it, literally, in computer years, 25 years ago in tech.

[00:12:16] Speaker A: Yeah.

[00:12:16] Speaker B: So now I've got my C status here, and it's probably going to add it at the back. You know what, let's do a little vibe coding. Before I go on, I'll say:

Fix the C status column to be at the end.

And this is an example of that vibe coding type of thing, because it put it in between, and Snowflake was going to put it at the end anyway. That's just the way Snowflake works. But I'm a little bit picky on that, and now I've got it the way I want. This is a good example because this is the way you work in this world today.

[00:13:14] Speaker A: You know what, what you just did is another thing you could define inside your skill, right? To say, hey, all new columns must be added to the end, when you have a certain thing that you want.
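As a rough illustration of the kind of rule Keith describes ("all new columns must be added to the end"), here is a minimal Python sketch of a check that could sit next to such a skill. The function name and every column name except C_STATUS are hypothetical and do not come from the demo.

```python
def appended_at_end(before: list[str], after: list[str]) -> bool:
    """True when `after` is `before` plus one or more new columns at the end."""
    return len(after) > len(before) and after[:len(before)] == before


# Hypothetical column list; only C_STATUS is taken from the demo.
before = ["C_CUSTKEY", "C_NAME", "C_ACCTBAL"]

ok = appended_at_end(before, before + ["C_STATUS"])
bad = appended_at_end(before, ["C_CUSTKEY", "C_STATUS", "C_NAME", "C_ACCTBAL"])
```

Here `ok` covers the case where the new column lands last, and `bad` covers the case where it was inserted in between existing columns.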

[00:13:28] Speaker B: I could. And this is generic enough that it's going to get it right all the time, and like I said, it's easy enough to fix. Now I'm going to force it to use the skill. This is the first skill we're using here, and after I get this done, I'll go into the actual skill and show you what the programming English looks like. Using Metis skill, and again, I can find the skill. So I'm going to force it, and I'll just start typing something like add col stage.

I'll say ingest data from public bucket, so an S3 bucket, and I'll give it the name because it will need to know. This is almost like replacing a form that you would fill out. I'll tell it which file, because this one has the new C status data in it. And then I'll say include new job, because it has to create a job, and add it to the pipeline.

And this is the part where I used to vibe code and do each one of these separately and, like I said, cajole it into giving me the right answer.

Now, with the skills, it should find the skill and say, I know how to do this. And look, it's going into the Metis skills and finding the assets and getting an idea of what it's going to have to do here.

[00:15:00] Speaker A: Yeah. And going back to the past, it was like, oh, I've got to get it from the file, then I've got to get it to a stage and create a pipeline to do that, and then I've got to get it from there to the final destination, which could be a lot of steps to do manually. Yeah, right.

[00:15:14] Speaker B: And now you get the proverbial.

[00:15:16] Speaker A: And it's telling you right there what it's doing. Right, those steps.

[00:15:18] Speaker B: Absolutely. And I used to do these separately, and that used to be the bane of my existence, because it would forget. Now look, it wants to make a directory change, so I approve adding a directory. It did that.

And now look, boom, the code is generated for that stage configuration there.

I mean, this is the part that still appears to be magic to me on a certain level, that it's able to do this so well. Now it's going to go into the customer table and, using the skill, figure out how to make it orchestrate that data there. So it took the C status column and now says: use that stage, get that file, and orchestrate it in. For those of you who aren't familiar with DataOps.live, that's the way we do stage ingestion from object storage. Now it's going to make a directory for my job.

[00:16:12] Speaker A: Yep.

[00:16:13] Speaker B: And now it's going to have to do that because it has to know how to do the job. There's a series of orchestrators that DataOps.live has, and one of them is stage ingestion: take data from a stage, and add that stage to the job. And there, look, it understands the environment variables, the context. So think of domain expertise and context.

And that's what these skills are doing. We're like three quarters of the way there now, and you notice I haven't touched anything. And now it's going into the full cycle. This is because I set up the skill to do this, and there it goes.

[00:16:47] Speaker A: You know, I can remember sitting there.

[00:16:50] Speaker B: Yeah.

[00:16:51] Speaker A: You know, we're talking about a skill. Years ago, I would write a document on how you're going to take data from a file and bring it to a stage. And then you would sit in front of a whiteboard with a team of data engineers and make sure everybody understood it.

Now, as a seasoned person, for people who are listening, if you're experienced, you're a lead, you're the architect, you really want to put your stamp on those skills to make sure that when people are vibe coding and doing this type of stuff, it's being executed the way you want it to be done.

[00:17:21] Speaker B: It's not going to be uncommon, I think, for people to take the skills they create with them wherever they go. It's going to be like, here are the skills I have with me. Not only your personal skills, but the skills you actually wrote. And now look, this is what I love. It did those steps and it explained exactly what it did. And now, without really having to know Git anymore, because we store these in a repo, I can say commit and sync changes.

I'm learning Git more this way than I did before.

[00:17:57] Speaker A: You're seeing the commands.

[00:17:59] Speaker B: Yes, because I see the commands all the time now. Of course, I knew it and all, but I would always get one of these things wrong.

[00:18:06] Speaker A: Wrong.

[00:18:06] Speaker B: And now this is just becoming commonplace where, look, it's adding a commit message based on what it did.

[00:18:14] Speaker A: And what's great too is that this isn't an autonomous thing that's just happening while you're blind. You're seeing what it's doing. You could stop it, correct it, change direction.

[00:18:25] Speaker B: Absolutely.

[00:18:26] Speaker A: If something isn't right. So it's AI, like you said, AI assist with human interaction to make sure it's getting it right. Back to what you said about taking your skills with you: I can remember, for many years I was consulting with Snowflake, and I would go to a new company and say, hey, do you have certain standards? Like, boop, I would pull it out of my back pocket and say, this is how I do a slowly changing dimension, or this is how I do this. Which might be the same as other architects or different. Everybody has their way. But to your point, this is how I've had success, and you can take those with you.

[00:19:05] Speaker B: Yeah. And then, I mean, it literally created those changes for me here. So if everything's going well, it should have kicked off a CI/CD pipeline, and it did. See, add C status column, and it automated that pipeline, and we should see an extra job in there. We should see in the Snowflake setup not only that the column was added, but that it ingested data from the stage there. Now let's look at the skill.

[00:19:36] Speaker A: Actually, before you go on, just to really make sure people understand: not only did you just build that pipeline, but back to True DataOps, you have all of the audits, you have everything you need. The documentation, everything has already been captured. You don't have to say, I'm going to come back to that later. Right?

[00:19:57] Speaker B: Exactly right.

Here's the pipeline running, and while this runs, which will take a few minutes, I'll go a little bit more into it. If we go into the repo, you can see the commit here for the branch. Again, remember the sandbox? We're working in the sandbox, and if you look, that's the one I just added. And there are the diffs. I mean, this is just beautiful, what it did. But let's go and look at the skill now. These are the standard ones that come with it, and there's just a dot-Metis skills directory. So they're versioned too. And you notice the ones that came out of the box were just the standard skills. These are the ones I created. So let's look at the add col stage skill. Physically, what they are is this: they all have a SKILL.md file. And this is just, again, programming English. What they call the front matter, or header piece of it, is required, where you give the skill a name and a description. This description is very important, because if I didn't force it to use the skill, it would use the skill based on this description: when a user asks to ingest data from an S3 bucket to load data in a new column, create a stage configuration and a stage ingestion job, and update full cycle to run the job. If it sees that and it's a match, it does what's called progressive disclosure. It says, okay, I will use this, and then it starts loading the SKILL.md. Now why is this important?

From a geeky technical standpoint, it's important because it limits the number of tokens you use. Instead of loading a whole bunch of context that's going to blow your context window out, that's this thing right here, it will load this, and then it's English. When to use the skill. Here's the location, here's the configuration, and the quick references are very important at the bottom here.
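The progressive disclosure idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the skill name `add-col-stage`, the SKILL.md text, and the word-overlap matcher are all hypothetical stand-ins, and the real matching is done by the model itself, not by keyword counting. The point it shows is that only the short header is read up front, and the longer body is loaded only after a match.

```python
from dataclasses import dataclass

# Hypothetical SKILL.md content: name, description, and body are illustrative,
# not copied from the skill shown in the demo.
SKILL_MD = """---
name: add-col-stage
description: Ingest data from an S3 bucket to load data in a new column
---
# When to use this skill
Create a stage configuration, a stage ingestion job, and update the pipeline.
"""


@dataclass
class SkillHeader:
    name: str
    description: str


def read_header(text: str) -> SkillHeader:
    """Parse only the header between the first two '---' markers."""
    _, front, _body = text.split("---", 2)
    fields = {}
    for line in front.strip().splitlines():
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    return SkillHeader(fields["name"], fields["description"])


def matches(header: SkillHeader, request: str, threshold: int = 3) -> bool:
    """Naive stand-in for the model's judgment: count words shared with the description."""
    shared = set(header.description.lower().split()) & set(request.lower().split())
    return len(shared) >= threshold


def load_body(text: str) -> str:
    """Return the full instructions; only called once a skill has matched."""
    return text.split("---", 2)[2].strip()


header = read_header(SKILL_MD)
request = "ingest data from a public S3 bucket into the new column"
context = load_body(SKILL_MD) if matches(header, request) else ""
```

Keeping the header small is what saves tokens: an agent can hold the name and description of many skills in context and pay for the full body of only the one it actually uses.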

For stage creation, use this reference MD. For the stage ingestion job, use this one. For table orchestrators, use this one. And then ready-to-copy asset examples. So you can give examples in the assets directory. You look at these, and this is what I would call fully following the open skill standards. There are open skill standards, and this adheres to them. So here's an example of the load job, the stage configuration, and how to do them, examples that it will use as templates. Almost think of them as a new form of user interface you're building for somebody, plus some assets, for example, what that configuration looks like, so the model can use that context and that domain expertise to build it. Now let's see if our pipeline finished. Kind of like a cooking show here, right?

[00:22:57] Speaker A: Well, the other thing to add on to that: what's also great about skills, like you said, is you don't have to be a highly technical person. You just have to understand your process and what you want to do. It's like your code is your documentation. It's all one and the same.

[00:23:12] Speaker B: Yes. And this is what's hopefully going to lead to a future True DataOps podcast: the specification becomes the source of truth, specifications will use well-defined skills as part of a platform, and that hierarchy will be more important for understanding the intent. It'll be intent-driven development. So let's look quickly at what happened here in Snowflake. It should have added the new column. It did, and it added that stage to ingest the data.

Then it added this job, and this is where you always got tripped up. This is what would always take many tries. And it loaded the data successfully in my feature branch. If I go to the feature branch now, view the table here, and refresh it, voila, we have the C status. That literally took 10 to 15 minutes. That, again, took me an hour and 22 minutes to do like four months ago. And you see C status has a value of A, B, and I believe C. Wouldn't it be great if we could ask Metis what that C status column really means?

[00:24:32] Speaker A: Right.

[00:24:32] Speaker B: So let's use a skill and do that now. Since we have this branch, let's ask. I'll force it to use a skill, using Metis skill, and I'll type meaning, so I get column meaning.

I'll just say, again in programming English: in current database environment,

tell me why

the newly added, and again, programming English in context, C status column contains values A, B or C.

Now this is something I've been hoping for for literally years and years. Now look, it's smart enough, based on the skill, to say: get the environment.

And look, it got the feature branch environment there. Now, what I constructed it to do is run some snow sql commands. So I'm managing tools with skills, which is different from MCP. MCP and skills are both needed, but if everything's local, skills might be the better answer for you. Let me query more stuff. So it's querying more stuff here.

[00:26:01] Speaker A: Man, the way we used to do data profiling and stuff years ago. Yeah, a separate tool, connect to this, right, some scripts.

[00:26:07] Speaker B: Look at that. I mean it's breaking it down. And this is where I say it's a great learning experience because it's breaking it down the way a very good analyst or architect or data analyst would break it down. So it's getting the values for A, B and C here. Now let's look at something else. Okay. It's doing some type of average,

[00:26:30] Speaker A: You know, again going back to the pillars of DataOps: take what's happening here, and you showed that pipeline. You could sit there and say, well, let's test to make sure that after every run we only have A, B and C. All of these things really make sure that you're not drifting, right?

[00:26:52] Speaker B: Yeah.

[00:26:52] Speaker A: I mean, it's your data that's going to affect your AI, whether you're doing ML or anything else downstream from these pipelines. It's a cyclical thing. You could build your tests not just for when you're creating it, but for the continuous running of your pipelines.
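The test Keith describes, checking after every run that the column only ever holds A, B, or C, could look roughly like the Python sketch below. It assumes the column values have already been fetched from the table; the function name and sample values are hypothetical, and a real pipeline would more likely express this as a test in the platform's own configuration.

```python
ALLOWED = {"A", "B", "C"}


def unexpected_statuses(values, allowed=ALLOWED):
    """Return the distinct values that fall outside the allowed set.

    Missing values (None) are reported as unexpected too.
    """
    return sorted({v for v in values if v not in allowed}, key=str)


# Illustrative values only, standing in for a query result on the status column.
clean = unexpected_statuses(["A", "B", "C", "A"])
drifted = unexpected_statuses(["A", "D", None, "D"])
```

An empty result means the run passed; anything else is a signal that the upstream data has drifted and the pipeline should fail or alert.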

[00:27:12] Speaker B: Well, look what it did. It said, after analyzing, here's what I got. C status means status. A: premium customers, with a balance range and an average balance. These are high-value customers with the largest account balances; it represents customers in good standing. B: average customers, standard customers regularly having a positive balance, with an average balance; it represents the majority. And then C: negative balance customers, with negative balances; it represents customers who may require credit or collection actions. And look at the business significance.
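The per-value profiling behind that answer (balance range and average balance for each status) can be approximated with a small Python sketch. The rows below are made up purely for illustration and are not the demo's data; in the demo the equivalent aggregation is run as SQL against Snowflake.

```python
from collections import defaultdict
from statistics import mean

# Made-up (status, account balance) rows for illustration only.
rows = [("A", 9000.0), ("A", 7000.0), ("B", 1200.0), ("B", 800.0), ("C", -150.0)]


def profile(rows):
    """Group balances by status and summarize count, range, and average."""
    grouped = defaultdict(list)
    for status, balance in rows:
        grouped[status].append(balance)
    return {
        status: {
            "count": len(balances),
            "min": min(balances),
            "max": max(balances),
            "avg": mean(balances),
        }
        for status, balances in sorted(grouped.items())
    }


summary = profile(rows)
```

A summary like this is the raw material an analyst, or the assistant, would use to infer that one status marks high-balance customers and another marks negative balances.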

I mean, I don't know what I'm going to do, I like it so much. I'm just going to say, and again, this is a little bit of vibe coding:

Save this analysis as documentation

in an MD file.

There you go. And now it's going to go off and change and save this as part of the change. So look, it's going to make a directory called Docs for me in my repo.

[00:28:18] Speaker A: Yep.

[00:28:19] Speaker B: And now, I think it's going to build out what it just said there, incorporating and understanding the change.

And again, I will show that skill, because it was a different approach to this skill. But look at that. It even gives an example query showing how to do it. Now it's going to go off, because it understood what I did before, and it's adding the Git commit. I mean, this is just the lazy person's nirvana, basically.

[00:28:54] Speaker A: These are all the things, Mark, where we were always told, you've got to deliver, the business needs you to deliver. You would skip something, right? You would always skip the documentation, or you'd skip thorough testing, because you needed to get it to the business. Here you've skipped nothing. In reality, you're going above and beyond. And for those people in highly regulated industries, whether it's banking, financial services, insurance, or health care, that level of depth is necessary.

[00:29:28] Speaker B: Absolutely. And now look, it's running the pipeline again.

The automation, the DataOps automation platform, you should never forget that's what's at the core of DataOps.live. This is all about automation, but it's improving automation. Now let's look at that last skill. And of course, we would merge this to the upper environment afterwards. That's the governance, that's the rules.

But let's look at the skill for get column meaning. You notice I didn't use any of the directories or anything else. All I did was describe it: when a user wants to determine what a table's column means in business terms.

For example, under when to use the skill, I say use this command to get the current environment, because that's important. It means the skill will work when you move it to prod, or whatever environment. Then I just say, use Snowflake's snow CLI to query the database, and here's an example of a query. And then I say very simply: elaborate on why the value of the column has its derived value. That's it. And that gave me the answer I wanted. And now if we look into our Metis, there's our docs directory, and look at that.

[00:30:50] Speaker A: There's the documentation.

[00:30:52] Speaker B: Look at that. I mean, that is just incredible. I mean this is literally that last piece I did can be two to three days worth of work.

[00:31:03] Speaker A: Oh, easily. And sometimes we would have an agile squad of people doing different parts of this and working together. But unfortunately, Mark, I know you and I could go on for hours and hours doing this, and hopefully we've given people a teaser here of what skills can do, how you can take those repeatable things and then everybody can leverage them. But if you were to say, hey, what would you want people to take away from today? What's the one thing you'd want people to take away?

[00:31:35] Speaker B: Yeah, actually a couple of things. One thing would be: start using it and see what works for you. This didn't work on my first crack. If you looked at my commit history here, I made a lot of changes to get these right, and I actually simplified them. I probably started too complex and narrowed them down. So it's certainly important to experiment and see what works for you. But the other thing is the urgency. Keep on top of this, the continuous learning, keep doing this. This is going to continue to evolve quickly. Stay on top of it, see what works, see what you could incorporate in your environment to, again, create that business value. That's what we're talking about here, accelerating value creation. And that's it. So, Keith, it's been a pleasure joining this podcast.

[00:32:34] Speaker A: This has been exciting. I've definitely enjoyed it. Mark, if anybody wants to learn more, see more, or talk with us more, please reach out. We're happy to give a personal demonstration of how this could work with your organization.

But again, Mark, thanks for joining us and for everybody else, thanks for paying attention, for watching and hope to see you next time. Thanks everybody.

[00:32:58] Speaker B: Bye bye.